Machines have always struggled with human language. We speak with nuance, context, and emotion, things computers historically couldn't grasp. Early models treated language as little more than sequences of symbols, focusing on word order or word frequency. That worked for basic tasks but fell apart when deeper meaning was needed. Then BERT came along. Developed by Google, BERT reads language more like people do, taking in the full sentence rather than just scanning from left to right. It doesn't guess based on isolated words; it learns from everything around them. BERT didn't just improve results; it changed how machines model meaning. It marked a turning point for modern natural language processing.

BERT (Bidirectional Encoder Representations from Transformers) is a deep learning model built on the Transformer architecture. What makes BERT special is its bidirectional approach. While older models read text left-to-right or right-to-left, BERT conditions on both the left and right context of every word at once. This allows it to understand context more naturally.

Take the sentence, “The bass was too loud to enjoy.” The word “bass” could refer to music or a fish. BERT looks at the entire sentence to figure out the intended meaning. This kind of nuanced understanding was hard for earlier models.

The model was pre-trained on vast text datasets, which helped it learn general language patterns. Once trained, BERT could be fine-tuned on smaller datasets for specific tasks. This flexibility meant that even without tons of custom data, developers could apply BERT to a wide range of problems and see strong results.

When it was released, BERT outperformed existing models across multiple natural language understanding benchmarks. It marked a shift away from simple pattern matching toward true contextual understanding, making it one of the most impactful models in natural language processing history.

At its core, BERT uses only the encoder half of the Transformer architecture. Transformers introduced the concept of self-attention—where each word in a sentence can pay attention to every other word. This helps the model understand how words relate to each other within a sentence.
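To make self-attention concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is an illustration under simplifying assumptions, not BERT's actual implementation: the token vectors are made-up numbers, and the same vectors serve as queries, keys, and values, whereas a real Transformer derives each from learned projections.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over one sequence.

    Each argument is a list of equal-length vectors, one per token.
    Returns (outputs, weights), where weights[i][j] is how much
    token i attends to token j.
    """
    dim = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this token's query with every token's key.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        row = softmax(scores)
        weights.append(row)
        # Output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(w * v[d] for w, v in zip(row, values)) for d in range(len(values[0]))]
        )
    return outputs, weights

# Toy 2-dimensional vectors for three tokens (illustrative values only).
tokens = ["the", "bass", "loud"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
outputs, weights = self_attention(vectors, vectors, vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each printed row shows how one token distributes its attention over the whole sentence, including words to its left and to its right.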

During pre-training, BERT uses two tasks. The first is Masked Language Modeling (MLM). Here, a random subset of the tokens in a sentence (about 15%) is hidden, mostly by replacing them with a [MASK] token, and the model learns to predict the original tokens from the surrounding context. This forces it to learn relationships between words rather than memorize them.
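The masking step can be sketched in a few lines. This follows the recipe from the original BERT paper: roughly 15% of positions are selected, and of those, 80% become [MASK], 10% become a random token, and 10% are left unchanged. The helper name `mask_tokens`, the toy vocabulary, and the whitespace tokenization are assumptions made for illustration; real BERT operates on WordPiece tokens.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (corrupted_tokens, labels) for masked language modeling.

    labels[i] holds the original token at selected positions and None
    elsewhere, so the loss is computed only where a prediction is required.
    """
    rng = rng or random.Random()
    corrupted, labels = list(tokens), [None] * len(tokens)
    for i, token in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected: left untouched and not scored
        labels[i] = token
        roll = rng.random()
        if roll < 0.8:
            corrupted[i] = MASK               # 80%: replace with [MASK]
        elif roll < 0.9:
            corrupted[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token in place
    return corrupted, labels

tokens = "the bass was too loud to enjoy".split()
vocab = ["fish", "music", "river", "guitar", "quiet"]  # hypothetical toy vocabulary
# A higher rate than 15% so that a seven-word demo is likely to show a change.
corrupted, labels = mask_tokens(tokens, vocab, mask_prob=0.5, rng=random.Random(0))
print(corrupted)
print(labels)
```

Keeping some selected tokens unchanged or swapping in random ones means the model cannot rely on seeing [MASK] at every position it must predict, which matters because [MASK] never appears during fine-tuning.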

The second task is Next Sentence Prediction (NSP), where BERT is given two sentences and asked if the second naturally follows the first. This helps the model learn how ideas connect across sentences, which is useful for tasks like reading comprehension.
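A rough sketch of how an NSP training example might be assembled is shown below. The [CLS] and [SEP] markers and the segment ids follow BERT's input format; the function name `make_nsp_example`, the toy sentences, and the whitespace tokenization are assumptions for illustration. In real pre-training the negative sentence is drawn from elsewhere in the corpus rather than from the same short list.

```python
import random

def make_nsp_example(sentences, index, rng):
    """Build one Next Sentence Prediction example from consecutive sentences.

    About half the time sentence B is the true next sentence ("IsNext");
    otherwise it is a randomly chosen other sentence ("NotNext").
    """
    first = sentences[index]
    if rng.random() < 0.5:
        second, label = sentences[index + 1], "IsNext"
    else:
        candidates = [s for j, s in enumerate(sentences) if j not in (index, index + 1)]
        second, label = rng.choice(candidates), "NotNext"
    a, b = first.split(), second.split()
    # BERT input layout: [CLS] sentence A [SEP] sentence B [SEP]
    tokens = ["[CLS]"] + a + ["[SEP]"] + b + ["[SEP]"]
    # Segment ids tell the model which sentence each token belongs to.
    segment_ids = [0] * (len(a) + 2) + [1] * (len(b) + 1)
    return tokens, segment_ids, label

sentences = [
    "the band started playing",
    "the bass was too loud to enjoy",
    "we caught a fish at the lake",
    "search engines rank pages",
]
tokens, segment_ids, label = make_nsp_example(sentences, 0, random.Random(0))
print(tokens)
print(segment_ids)
print(label)
```

The model sees the combined sequence and predicts the label from the representation at the [CLS] position.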

After pre-training on large unlabelled corpora, such as English Wikipedia and BooksCorpus, BERT is fine-tuned for specific use cases. For example, in a sentiment analysis task, a small classification layer is added on top and the model’s weights are adjusted slightly using labelled data showing which sentences are positive or negative. This allows BERT to be reused across tasks without needing to retrain from scratch every time.

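BERT’s structure includes multiple layers and attention heads: the base version stacks 12 Transformer layers with 12 attention heads each, and the large version uses 24 layers with 16 heads each. These components allow it to capture different kinds of information at different depths, giving it a layered understanding of language.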

BERT has found use in many areas. One of the most visible examples is Google Search. When Google added BERT to its search ranking in 2019, results improved, especially for longer or more conversational queries. Instead of matching keywords, BERT helped the system understand the meaning behind the words.

Beyond search, BERT is widely used in chatbots, document classification, recommendation systems, and more. In healthcare, it helps analyze patient notes. In legal services, it supports contract review. In customer service, BERT helps categorize queries and route them to the right department.

The release of the Transformers library by Hugging Face played a key role in making BERT accessible. With just a few lines of code, developers could fine-tune BERT for their projects. This led to a surge in NLP development and experimentation, even among teams without deep machine-learning expertise.
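As a rough idea of what those few lines look like, here is a sketch of a single fine-tuning step for two-class sentiment analysis. It assumes the `torch` and `transformers` packages are installed and that the `bert-base-uncased` checkpoint can be downloaded; the function name `fine_tune_step` and the learning rate are illustrative choices, not a recommended recipe.

```python
def fine_tune_step(texts, labels, model_name="bert-base-uncased", lr=2e-5):
    """Run one gradient step of sentiment fine-tuning and return the loss.

    Imports live inside the function so that defining it does not require
    the libraries; calling it downloads the checkpoint on first use.
    """
    import torch
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    # Loads the pre-trained encoder and adds a fresh 2-class classification head.
    model = AutoModelForSequenceClassification.from_pretrained(model_name, num_labels=2)
    optimizer = torch.optim.AdamW(model.parameters(), lr=lr)

    # Tokenize, pad to a common length, and return PyTorch tensors.
    batch = tokenizer(texts, padding=True, truncation=True, return_tensors="pt")

    model.train()
    outputs = model(**batch, labels=torch.tensor(labels))  # loss computed internally
    outputs.loss.backward()
    optimizer.step()
    optimizer.zero_grad()
    return outputs.loss.item()
```

A call such as `fine_tune_step(["I loved it", "Terrible service"], [1, 0])` performs one update; an actual fine-tuning run would loop over many batches for a few epochs and evaluate on held-out data.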

BERT has also inspired a range of related models. DistilBERT is a smaller, faster version, trained by knowledge distillation, with nearly the same performance. RoBERTa retrains BERT without the NSP task, with more data and longer training, for better results. ALBERT shares parameters across layers to reduce the parameter count. These variations show how the core idea behind BERT can be adjusted for different needs without losing its strengths.

In each of these uses, the label “state-of-the-art NLP model” fits because BERT isn’t limited to one task. It serves as a base for many specialized systems that need reliable language understanding.

BERT has a strong grasp of context and works well on a wide range of tasks, but it’s not perfect. One issue is its size. The base version has 110 million parameters, and the large version has 340 million. This makes it demanding in terms of memory and processing, which can be a barrier in some environments.

Another limitation is that BERT isn’t designed to generate language; it classifies, labels, or extracts spans from text it is given. So it’s not suited for tasks like open-ended writing or abstractive summarization. For that, models designed for generation, such as GPT, are more appropriate.

BERT’s knowledge is also fixed at training time. It doesn’t learn new information unless it is retrained or fine-tuned again. In settings where knowledge changes frequently, that’s a downside. Updating BERT can be done, but it’s not automatic.

Despite these limits, BERT remains one of the most effective models for tasks that involve understanding what text means. From sentiment analysis to extracting answers from paragraphs, it provides reliable results that are hard to match with simpler tools.

BERT changed how machines approach language. Instead of treating words as isolated parts, it looks at how everything fits together. This helped improve systems like search engines, chat assistants, and many other tools that rely on natural language. With its deep understanding of context and flexibility for different tasks, BERT has become a foundation for modern NLP. Even with newer models emerging, BERT’s structure, training method, and impact continue to shape how developers build language systems. It’s a key example of how machine learning can move closer to understanding human communication, not just translating it into code.

Advertisement

How explainable artificial intelligence helps AI and ML engineers build transparent and trustworthy models. Discover practical techniques and challenges of XAI for engineers in real-world applications

Prepare for your Snowflake interview with key questions and expert answers covering Snowflake architecture, virtual warehouses, time travel, micro-partitions, concurrency, and more

Looking for the next big thing in Python development? Explore upcoming libraries like PyScript, TensorFlow Quantum, FastAPI 2.0, and more that will redefine how you build and deploy systems in 2025

Improve automatic speech recognition accuracy by boosting Wav2Vec2 with an n-gram language model using Transformers and pyctcdecode. Learn how shallow fusion enhances transcription quality

Learn how Redis OM for Python transforms Redis into a model-driven, queryable data layer with real-time performance. Define, store, and query structured data easily—no raw commands needed

Gradio is joining Hugging Face in a move that simplifies machine learning interfaces and model sharing. Discover how this partnership makes AI tools more accessible for developers, educators, and users

Are you running into frustrating bugs with PyTorch? Discover the common mistakes developers make and learn how to avoid them for smoother machine learning projects

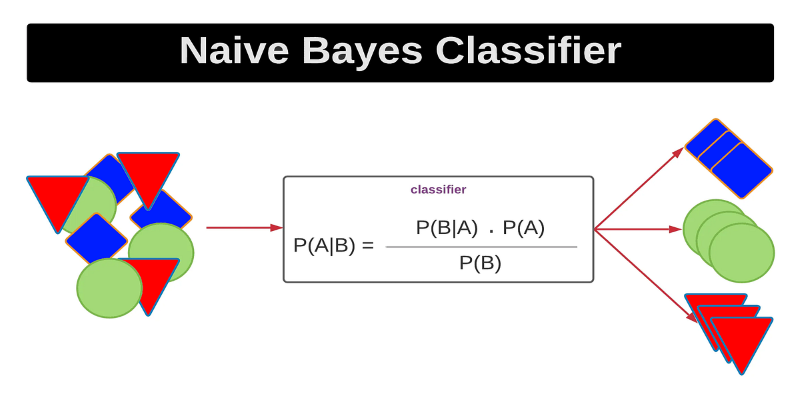

Curious how a simple algorithm can deliver strong ML results with minimal tuning? This beginner’s guide breaks down Naive Bayes—its logic, types, code examples, and where it really shines

Wondering how Docker works or why it’s everywhere in devops? Learn how containers simplify app deployment—and how to get started in minutes

How Sempre Health is accelerating its ML roadmap with the help of the Expert Acceleration Program, improving model deployment, patient outcomes, and internal efficiency

The Hugging Face Fellowship Program offers early-career developers paid opportunities, mentorship, and real project work to help them grow within the inclusive AI community

Confused about DAO and DTO in Python? Learn how these simple patterns can clean up your code, reduce duplication, and improve long-term maintainability