
Artificial intelligence has moved beyond the experimental stage and now powers everything from medical diagnostics to financial predictions. But as machine learning models grow more complex, their decision-making processes become harder to understand. Engineers often build highly accurate models without being able to clearly explain how those models reach their outputs.

Explainable Artificial Intelligence, or XAI, addresses this gap by making models more transparent and interpretable while preserving much of their predictive strength. For AI and ML engineers, XAI is increasingly necessary, helping to ensure accountability and trust in automated decisions, especially where outcomes impact real people and carry ethical or legal implications.

AI models often function as black boxes: data goes in, predictions come out, but the internal reasoning stays hidden, particularly with deep learning and ensemble methods. High accuracy alone is not enough when a wrong or biased decision can harm someone. In healthcare, credit scoring, or hiring, the ability to explain how a prediction was made is critical for fairness, error detection, and compliance.

For engineers, explainability has practical benefits. It can reveal when a model relies on irrelevant or biased features, for example when a health prediction model leans on geographic location instead of medical indicators. This insight allows engineers to improve model design and avoid unintended harm.
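One common way to surface this kind of reliance is permutation importance: shuffle a single feature's column and measure how much the model's error grows. The sketch below uses only the Python standard library; the model, the feature names `blood_pressure` and `region_code`, and the data are all hypothetical stand-ins chosen for illustration.

```python
import random


# Hypothetical toy health-risk model. It deliberately weights a geographic
# code more heavily than the medical indicator, to mimic the failure above.
def risk_model(row):
    blood_pressure, region_code = row
    return 0.2 * blood_pressure + 0.8 * region_code


def mean_squared_error(model, rows, targets):
    return sum((model(r) - t) ** 2 for r, t in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, feature_index, seed=0):
    """Increase in error after shuffling one feature column, breaking its link to the target."""
    rng = random.Random(seed)
    baseline_error = mean_squared_error(model, rows, targets)
    column = [r[feature_index] for r in rows]
    rng.shuffle(column)
    shuffled_rows = [
        tuple(column[i] if j == feature_index else value for j, value in enumerate(r))
        for i, r in enumerate(rows)
    ]
    return mean_squared_error(model, shuffled_rows, targets) - baseline_error


rng = random.Random(42)
rows = [(rng.uniform(0, 1), rng.uniform(0, 1)) for _ in range(500)]
# Using the model's own outputs as targets measures how much the model relies
# on each feature, independent of whether that reliance is justified.
targets = [risk_model(r) for r in rows]
importances = {
    name: permutation_importance(risk_model, rows, targets, index)
    for index, name in enumerate(["blood_pressure", "region_code"])
}
print(importances)  # a larger value means stronger reliance on that feature
```

A much larger score for `region_code` would be the signal to investigate. Permutation importance measures reliance, not causation, and can mislead when features are strongly correlated.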

Trust is another reason explainability matters. Stakeholders and end-users are more likely to act on AI-driven recommendations when they understand the reasoning behind them. Regulatory pressure is growing, too. In some regions, laws already require that automated decisions affecting individuals be explainable, which engineers must keep in mind when developing solutions.

There are several ways to make AI models explainable, and the choice depends on the application and type of model. Broadly, XAI methods fall into two groups: interpretable models and post-hoc explanations.

Interpretable models are inherently transparent. Linear regression, decision trees, and rule-based systems let engineers see how each feature contributes to the outcome. But their simplicity often limits performance on complex tasks.
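To illustrate why linear models count as inherently interpretable, here is a minimal sketch, assuming a hypothetical credit-scoring model with hand-picked weights: each feature's contribution is simply its weight times its value, so the score decomposes exactly into readable parts.

```python
# Hypothetical linear credit-scoring model; the weights are illustrative, not fitted.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -2.5, "years_employed": 0.15}
INTERCEPT = -1.0


def score_with_contributions(applicant):
    """Return the model score plus each feature's additive contribution (weight * value)."""
    contributions = {name: weight * applicant[name] for name, weight in WEIGHTS.items()}
    score = INTERCEPT + sum(contributions.values())
    return score, contributions


score, contributions = score_with_contributions(
    {"income_k": 50, "debt_ratio": 0.4, "years_employed": 4}
)
print(f"score = {score:.2f}")
# List features from most to least influential for this applicant.
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>15}: {value:+.2f}")
```

The same transparency is what limits such models: a single weight per feature cannot capture interactions unless an engineer adds them explicitly.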

For higher-performing models like neural networks or gradient-boosted trees, post-hoc methods explain decisions after training. Feature importance scores, SHAP (SHapley Additive exPlanations), and LIME (Local Interpretable Model-agnostic Explanations) are widely used. SHAP assigns each feature a contribution derived from Shapley values in cooperative game theory, so that the contributions add up to the difference between the prediction and a baseline output, while LIME fits a simple, interpretable surrogate, such as a sparse linear model, to the model's behavior around a single prediction. These techniques offer insight into which inputs influenced a prediction most.
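The game-theoretic idea behind SHAP can be shown with a brute-force computation of Shapley values. This is a simplified sketch, not the `shap` library's API: it assumes a single baseline input stands in for "absent" features (SHAP implementations typically average over a background dataset), and it enumerates every coalition, which is only feasible for a handful of features. `toy_model` is a hypothetical function.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values, treating features outside a coalition as set to the baseline."""
    n = len(x)

    def value(coalition):
        # Features in the coalition keep their real value; the rest use the baseline.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(z):
    # Two additive effects plus one interaction between features 0 and 2.
    return 3 * z[0] + 2 * z[1] + z[0] * z[2]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print([round(p, 6) for p in phi])
```

Because the Shapley values sum to the difference between the prediction and the baseline output, the interaction term `z[0] * z[2]` ends up split evenly between features 0 and 2.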

In image and text classification, visual techniques help. Heatmaps and saliency maps highlight which regions of an image most influenced the decision. In natural language processing, attention weight visualizations can suggest which words the model focused on, although attention weights are not always a faithful measure of a word's influence.
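A saliency map is, at its core, the sensitivity of the model's score to each input pixel. Gradient-based saliency usually obtains this with backpropagation in a framework such as PyTorch or TensorFlow; the dependency-free sketch below approximates the same quantity with finite differences on a hypothetical 3×3 scorer, `toy_image_score`.

```python
def toy_image_score(image):
    # Hypothetical scorer: reacts strongly to the centre pixel, weakly to the top-left.
    return 5.0 * image[1][1] ** 2 + 0.5 * image[0][0]


def saliency_map(score_fn, image, eps=1e-4):
    """Absolute finite-difference sensitivity of the score to each pixel."""
    rows, cols = len(image), len(image[0])
    base = score_fn(image)
    saliency = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            bumped = [row[:] for row in image]  # copy so the input stays unchanged
            bumped[r][c] += eps
            saliency[r][c] = abs(score_fn(bumped) - base) / eps
    return saliency


image = [[0.5] * 3 for _ in range(3)]
sal = saliency_map(toy_image_score, image)
for row in sal:
    print(" ".join(f"{v:.3f}" for v in row))
```

Rendered as a heatmap, the bright centre cell is what an engineer would read as "the region that drove the decision."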

Causal explanation methods are emerging as well. Unlike correlation-based techniques, they explore cause-and-effect relationships, offering more actionable insights. For engineers, these approaches can help assess whether a model’s decisions would hold if inputs changed in meaningful ways.
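A simple way to probe whether a decision would hold under meaningful input changes is a what-if test: nudge one feature at a time and record the value at which the decision flips. This is a counterfactual probe of the model's behavior, not a full causal analysis of the real world, and the decision rule, feature names, and step sizes below are hypothetical.

```python
def approve(applicant):
    # Hypothetical decision rule standing in for a trained classifier.
    return 0.04 * applicant["income_k"] - 2.5 * applicant["debt_ratio"] >= 1.0


def smallest_flip(model, applicant, feature, step, max_steps=100):
    """Nudge one feature by `step` until the decision changes; return the new value or None."""
    original = model(applicant)
    candidate = dict(applicant)  # leave the caller's record untouched
    for _ in range(max_steps):
        candidate[feature] += step
        if model(candidate) != original:
            return candidate[feature]
    return None


applicant = {"income_k": 42, "debt_ratio": 0.4, "region_code": 7}
income_flip = smallest_flip(approve, applicant, "income_k", step=5)
debt_flip = smallest_flip(approve, applicant, "debt_ratio", step=-0.1)
region_flip = smallest_flip(approve, applicant, "region_code", step=1)
print(approve(applicant), income_flip, debt_flip, region_flip)
```

`region_code` never flips the decision here, which is consistent with a model that ignores it; a flip driven by a feature that should be irrelevant would be a red flag.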

XAI comes with challenges that engineers must navigate. One key trade-off is between explainability and accuracy. Simpler, more interpretable models can perform worse on complex data, while opaque models often excel in predictive power. Balancing these priorities is a constant part of the engineering process.

Audience expectations also matter. Different groups require different levels of detail. A technical team might want detailed feature interactions, while end-users need clear, simple explanations they can act on. Crafting explanations that are both faithful to the model and easy to understand is not always straightforward.

Engineers should remain cautious about overconfidence in explanations. Many post-hoc techniques approximate rather than reveal exact reasoning. For instance, a feature importance plot might overstate a variable’s role without showing whether it truly causes the outcome. Recognizing these limitations helps avoid misinterpretation.

Scalability presents another hurdle. Explaining a handful of predictions is feasible, but applying explanation techniques in real time, across millions of decisions, demands efficient methods. Engineers must consider how well their chosen XAI approaches fit within production systems.
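Scalability is one reason practical SHAP implementations use approximations or model-specific algorithms. Exact Shapley values require evaluating the model on every subset of features, which grows exponentially with the number of features. A common alternative is Monte Carlo sampling over random feature orderings; the sketch below assumes a single baseline input and a hypothetical `toy_model`, and costs `num_permutations * (n + 1)` model calls regardless of how many subsets exist.

```python
import random


def sampled_shapley(model, x, baseline, num_permutations=200, seed=0):
    """Approximate Shapley values by averaging marginal contributions over random orderings."""
    rng = random.Random(seed)
    n = len(x)
    phi = [0.0] * n
    for _ in range(num_permutations):
        order = list(range(n))
        rng.shuffle(order)
        z = list(baseline)  # start with every feature "absent"
        prev = model(z)
        for i in order:
            z[i] = x[i]  # add feature i to the coalition
            cur = model(z)
            phi[i] += cur - prev
            prev = cur
    return [p / num_permutations for p in phi]


def toy_model(z):
    # Two additive effects plus one interaction between features 0 and 2.
    return 3 * z[0] + 2 * z[1] + z[0] * z[2]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = sampled_shapley(toy_model, x, baseline)
print([round(p, 3) for p in phi])
```

Each sampled ordering's contributions sum exactly to `f(x) - f(baseline)`, so the estimates stay additive; only the split between interacting features is noisy, and that noise shrinks as `num_permutations` grows.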

Finally, XAI itself is a growing field without fixed standards. New techniques and benchmarks continue to emerge. Engineers need to stay updated, evaluating each method critically and adjusting their approach as tools evolve.

Explainable Artificial Intelligence is shaping the way AI and ML engineers approach model development. As models become even more powerful and integrated into daily decision-making, the demand for transparency will only grow. Engineers who understand and apply XAI techniques are better positioned to build models that are not just accurate but also trustworthy and fair.

Future advancements in XAI are likely to make explanations more accurate, scalable, and context-aware. Hybrid approaches that combine the predictive strength of complex models with the interpretability of simpler models are already gaining traction. Advances in causal inference, human-in-the-loop systems, and standardized interpretability metrics will give engineers even more tools to work with.

For engineers, XAI is more than just another technical tool; it represents a mindset shift toward accountability and human-centered AI. Making models explainable helps bridge the gap between machines and people, aligning predictions more closely with human values and expectations.

By understanding the principles, techniques, and trade-offs of XAI, engineers can design systems that not only perform well but are also understood and trusted by those who use them.

Explainable Artificial Intelligence has become an essential skill for AI and ML engineers. As machine learning influences more decisions that affect people directly, the ability to explain predictions accurately carries significant weight. XAI supports trust, improves reliability, and helps meet growing legal and ethical demands for transparency. It also enables engineers to refine their models by exposing hidden biases or flaws. By weaving explainability into their workflow, engineers build AI that earns confidence and serves people better. With a thoughtful approach to XAI, engineers can design systems that combine intelligence with clarity, making their work more impactful and responsible.

Advertisement

Learn how Redis OM for Python transforms Redis into a model-driven, queryable data layer with real-time performance. Define, store, and query structured data easily—no raw commands needed

Struggling with a small dataset? Learn practical strategies like data augmentation, transfer learning, and model selection to build effective machine learning models even with limited data

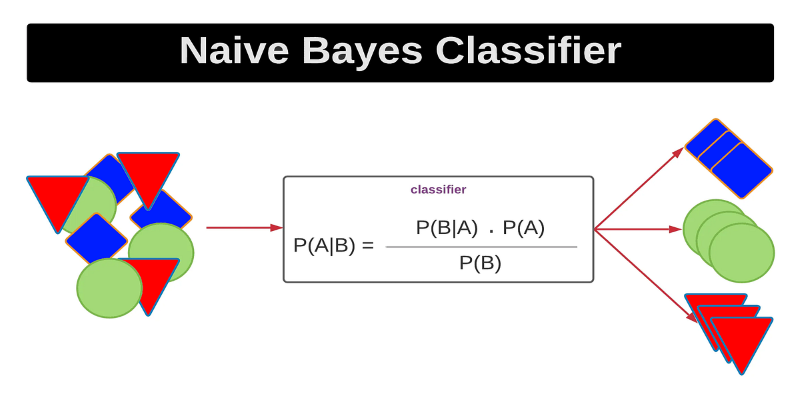

Curious how a simple algorithm can deliver strong ML results with minimal tuning? This beginner’s guide breaks down Naive Bayes—its logic, types, code examples, and where it really shines

How Sempre Health is accelerating its ML roadmap with the help of the Expert Acceleration Program, improving model deployment, patient outcomes, and internal efficiency

Heard of Julia but unsure what it offers? Learn why this fast, readable language is gaining ground in data science—with real tools, clean syntax, and powerful performance for big tasks

Learn how to create a Telegram bot using Python with this clear, step-by-step guide. From getting your token to writing commands and deploying your bot, it's all here

Learn how to impute missing dates in time series datasets using Python and pandas. This guide covers reindexing, filling gaps, and ensuring continuous timelines for accurate analysis

How explainable artificial intelligence helps AI and ML engineers build transparent and trustworthy models. Discover practical techniques and challenges of XAI for engineers in real-world applications

Explore Proximal Policy Optimization, a widely-used reinforcement learning algorithm known for its stable performance and simplicity in complex environments like robotics and gaming

The Hugging Face Fellowship Program offers early-career developers paid opportunities, mentorship, and real project work to help them grow within the inclusive AI community

How Summer at Hugging Face brings new contributors, open-source collaboration, and creative model development to life while energizing the AI community worldwide

How to train and fine-tune sentence transformers to create high-performing NLP models tailored to your data. Understand the tools, methods, and strategies to make the most of sentence embedding models