Telegram bots aren’t as mysterious as they sound. In fact, with just a bit of Python and a clear set of steps, you can make your own bot from scratch—even if you’ve never touched the Telegram API before. These bots can respond to messages, deliver updates, run commands, or even just send memes when you're feeling bored. So, if you're curious to see how it all works, this is exactly where you want to be.

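Before we write a single line of code, we need Telegram’s blessing, aka a bot token. That starts with talking to a bot named @BotFather: open a chat with it in Telegram, send /newbot, pick a display name and a username that ends in "bot", and BotFather replies with your token. And no, you don’t need to install anything yet.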

And that’s it for the first phase. You now officially own a Telegram bot.

Now we get into the fun part—writing code. If you don’t have Python installed, just grab the latest version from python.org. Once that’s done, you’ll also need a package that makes interacting with Telegram easier.

We’re going to use python-telegram-bot, a clean and beginner-friendly library.

Open your terminal or command prompt and run:

    pip install python-telegram-bot

This gives you everything you need to get started. No long setup. No overwhelming list of dependencies. Just one simple install, and you're in business.
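The script in this guide relies on ApplicationBuilder and async handlers, which belong to the version 20 and newer API of python-telegram-bot. If you want to confirm what pip actually installed, a small check along these lines may help. The helper names `installed_version` and `major_version` are made up for this sketch and are not part of the library.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package_name):
    """Return the installed version string, or None if the package is missing."""
    try:
        return version(package_name)
    except PackageNotFoundError:
        return None


def major_version(version_string):
    """Extract the leading integer from a version string such as '20.7'."""
    return int(version_string.split('.')[0])


ptb_version = installed_version('python-telegram-bot')
if ptb_version is None:
    print('python-telegram-bot is not installed')
elif major_version(ptb_version) < 20:
    print(f'Found {ptb_version}; this guide assumes version 20 or newer')
else:
    print(f'Found {ptb_version}')
```

If the check reports an older release, running `pip install --upgrade python-telegram-bot` should bring it up to date.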

Now that you've got the token and the package installed, it's time to write the actual bot. Start with a basic script—one that replies when someone sends a message.

Create a new Python file, say my_telegram_bot.py, and paste in this code:

    from telegram.ext import ApplicationBuilder, CommandHandler, MessageHandler, filters

    # Replace 'YOUR_TOKEN_HERE' with your actual bot token
    BOT_TOKEN = 'YOUR_TOKEN_HERE'

    async def start(update, context):
        await update.message.reply_text('Hello! I\'m your bot, ready to serve.')

    async def echo(update, context):
        user_text = update.message.text
        await update.message.reply_text(f'You said: {user_text}')

    def main():
        app = ApplicationBuilder().token(BOT_TOKEN).build()
        app.add_handler(CommandHandler('start', start))
        app.add_handler(MessageHandler(filters.TEXT & ~filters.COMMAND, echo))
        app.run_polling()

    if __name__ == '__main__':
        main()

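Here's what this script does: the /start command triggers a short greeting, any plain text message that isn't a command gets echoed back with "You said:" in front of it, and run_polling() keeps asking Telegram for new updates until you stop the script.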

Simple? Absolutely. But powerful enough to build on later.
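Because each handler is just an async function that reads from `update` and calls `reply_text`, you can try one out without a token or a network connection. The sketch below is one possible way to do that, using stand-in objects from the standard library in place of real Telegram updates. `make_fake_update` is a hypothetical helper written for this example, not something python-telegram-bot provides.

```python
import asyncio
from types import SimpleNamespace
from unittest.mock import AsyncMock


async def echo(update, context):
    # Same handler as in the main script
    user_text = update.message.text
    await update.message.reply_text(f'You said: {user_text}')


def make_fake_update(text):
    """Build a minimal stand-in exposing only the attributes echo() touches."""
    message = SimpleNamespace(text=text, reply_text=AsyncMock())
    return SimpleNamespace(message=message)


fake_update = make_fake_update('hello')
asyncio.run(echo(fake_update, None))

# Raises AssertionError if the handler replied with anything else
fake_update.message.reply_text.assert_awaited_once_with('You said: hello')
```

This only exercises your own logic. It says nothing about whether the token is valid or whether Telegram can reach the bot, so a quick manual test in the app is still worthwhile.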

Once your bot is live, you can start making it do more than just parrot what people say. You could add custom commands, reply with stickers, or even call APIs to get real-time data.

Let’s say you want to add a command that gives the current time. Here’s how you’d do it:

    import datetime

    async def time_now(update, context):
        now = datetime.datetime.now().strftime('%Y-%m-%d %H:%M:%S')
        await update.message.reply_text(f'Current time is: {now}')

Then, register this new command just like the /start one:

    app.add_handler(CommandHandler('time', time_now))
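One thing to keep in mind: datetime.datetime.now() returns the local time of whatever machine runs the bot, which may not match the user's clock once the script lives on a server. A possible variation, sketched here, reports UTC and says so in the reply. `format_utc` is a helper invented for this example.

```python
from datetime import datetime, timezone


def format_utc(moment):
    """Format a timezone-aware datetime as 'YYYY-MM-DD HH:MM:SS UTC'."""
    return moment.astimezone(timezone.utc).strftime('%Y-%m-%d %H:%M:%S UTC')


async def time_now(update, context):
    now = format_utc(datetime.now(timezone.utc))
    await update.message.reply_text(f'Current time is: {now}')
```

Keeping the formatting in its own function makes it easy to check with a fixed datetime, while the handler itself stays a two-liner.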

Or, maybe you want your bot to throw in a bit of humor. Here’s a quick addition:

    import random

    jokes = [
        "Why did the programmer quit his job? Because he didn't get arrays.",
        "Why do Java developers wear glasses? Because they can’t C#.",
        "I told my computer I needed a break, and it said, 'No problem, I'll go to sleep.'"
    ]

    async def joke(update, context):
        await update.message.reply_text(random.choice(jokes))

    app.add_handler(CommandHandler('joke', joke))

Now whenever someone sends /joke, they'll get a random tech joke, which might just make debugging a little less painful.

Even simple bots run into issues—invalid inputs, dropped connections, or unhandled commands. If you're not catching those, your script might crash unexpectedly. A better approach is to anticipate those hiccups and handle them quietly in the background.

To log and deal with errors, you can add an error handler like this:

    import logging

    from telegram.error import TelegramError

    logging.basicConfig(
        format='%(asctime)s - %(name)s - %(levelname)s - %(message)s',
        level=logging.INFO
    )

    async def error_handler(update, context):
        logging.error(msg="Exception while handling an update:", exc_info=context.error)

    app.add_error_handler(error_handler)

This setup doesn't just keep your bot alive during unexpected failures—it also helps you figure out what went wrong by recording full error details. Once you’ve got logging in place, debugging becomes way less frustrating, especially as your bot grows.
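If you would also like the user to know that something failed, the error handler can send a short apology after logging. The version below is a sketch under one assumption worth stating: the handler may be called with no usable update (for example when the failure did not come from a message), so it checks for `effective_message` before replying. The reply wording and the offline check at the bottom are illustrative only.

```python
import asyncio
import logging
from types import SimpleNamespace
from unittest.mock import AsyncMock

logger = logging.getLogger(__name__)


async def error_handler(update, context):
    # Record the full exception details first
    logger.error('Exception while handling an update:', exc_info=context.error)

    # Reply only when there is a message to reply to
    message = getattr(update, 'effective_message', None)
    if message is not None:
        await message.reply_text('Sorry, something went wrong on my end.')


# Offline check with stand-in objects (no Telegram connection involved)
fake_context = SimpleNamespace(error=ValueError('boom'))
fake_update = SimpleNamespace(
    effective_message=SimpleNamespace(reply_text=AsyncMock())
)
asyncio.run(error_handler(fake_update, fake_context))
asyncio.run(error_handler(None, fake_context))  # must not raise
```

Register it the same way as before, with app.add_error_handler(error_handler).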

Running your bot locally is fine for testing, but it shuts down the moment you close your laptop. If you want your bot available 24/7, you’ll need to host it somewhere reliable.

One of the easiest options is to use PythonAnywhere or Render. Both offer simple web-based environments where you can upload your code and run it without keeping your device on.

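Here's the general idea: upload your script, install python-telegram-bot in the hosted environment, supply your bot token, and start the script as a long-running process.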

For more control or larger bots, you might consider VPS hosting with services like Linode or DigitalOcean. Either way, hosting keeps your bot responsive and active for your users around the clock.
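Once the script leaves your machine, you may prefer not to keep the token inside the source file. A common pattern, sketched here, is to read it from an environment variable that you set in the host's dashboard or shell. The variable name `TELEGRAM_BOT_TOKEN` and the helper `load_token` are choices made for this example, not anything Telegram or the library requires.

```python
import os


def load_token(environ=os.environ, var_name='TELEGRAM_BOT_TOKEN'):
    """Read the bot token from the environment, failing early if it is missing."""
    token = environ.get(var_name, '').strip()
    if not token:
        raise RuntimeError(
            f'Set the {var_name} environment variable before starting the bot'
        )
    return token
```

In the main script, `BOT_TOKEN = load_token()` would then replace the hard-coded string, and the same file can be shared or committed without exposing the token.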

Telegram bots don’t require rocket science or massive frameworks. With a token, a few lines of Python, and a little time, you can build one that suits your needs exactly. Whether it’s for fun, utility, or simply curiosity, the whole thing is approachable and clean. You’re not wading through bloated configs or messy dependencies—just writing scripts that work.

The best part? You now know how to go from zero to a functioning Telegram bot without any unnecessary complexity. Keep that token safe, keep your scripts organized, and feel free to keep adding new features as you go. You started this with nothing more than a Telegram app and an idea. And now? You’ve got a bot, responding in real time, built entirely with your own code. That’s a win.

Advertisement

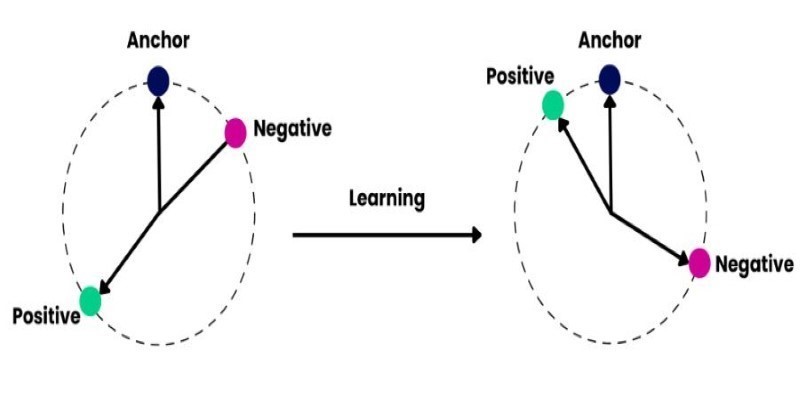

How to train and fine-tune sentence transformers to create high-performing NLP models tailored to your data. Understand the tools, methods, and strategies to make the most of sentence embedding models

Struggling with a small dataset? Learn practical strategies like data augmentation, transfer learning, and model selection to build effective machine learning models even with limited data

Learn how to impute missing dates in time series datasets using Python and pandas. This guide covers reindexing, filling gaps, and ensuring continuous timelines for accurate analysis

The Hugging Face Fellowship Program offers early-career developers paid opportunities, mentorship, and real project work to help them grow within the inclusive AI community

Explore the sigmoid function, how it works in neural networks, why its derivative matters, and its continued relevance in machine learning models, especially for binary classification

Learn how Redis OM for Python transforms Redis into a model-driven, queryable data layer with real-time performance. Define, store, and query structured data easily—no raw commands needed

Improve automatic speech recognition accuracy by boosting Wav2Vec2 with an n-gram language model using Transformers and pyctcdecode. Learn how shallow fusion enhances transcription quality

How accelerated inference using Optimum and Transformers pipelines can significantly improve model speed and efficiency across AI tasks. Learn how to streamline deployment with real-world gains

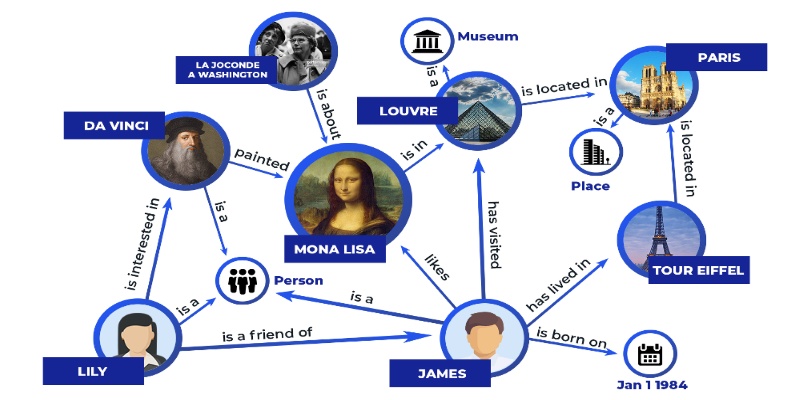

Discover how knowledge graphs work, why companies like Google and Amazon use them, and how they turn raw data into connected, intelligent systems that power search, recommendations, and discovery

Curious how to build your first serverless function? Follow this hands-on AWS Lambda tutorial to create, test, and deploy a Python Lambda—from setup to CloudWatch monitoring

Looking for the next big thing in Python development? Explore upcoming libraries like PyScript, TensorFlow Quantum, FastAPI 2.0, and more that will redefine how you build and deploy systems in 2025

Explore Proximal Policy Optimization, a widely-used reinforcement learning algorithm known for its stable performance and simplicity in complex environments like robotics and gaming