When building interactive applications that rely on visual or audio inputs, one of the biggest hurdles is integrating machine learning without getting lost in it. You don’t want to be knee-deep in model optimization, pre-processing routines, or debugging TensorFlow graphs when all you really want is a working face detector or hand tracker. This is where Google’s MediaPipe Tasks API steps in: clean, focused, and efficient. If you’ve ever tried implementing real-time ML in a project, you’ll understand the relief this API brings.

The MediaPipe Tasks API is a collection of high-level solutions built on top of MediaPipe, designed to perform common machine learning tasks without requiring you to build or train a deep learning model from scratch. These tasks include object detection, gesture recognition, face landmarking, text classification, and audio analysis. The main point? You get working ML features straight out of the box. Just feed in your input, and get structured, ready-to-use results in return.

But here’s the kicker — it doesn’t bury you under layers of abstraction. You still get access to meaningful output and hooks for customization if needed. And the setup? Surprisingly minimal. You don’t even need to spend hours preparing your environment or optimizing pipeline performance. It’s all handled.

The Tasks API isn’t trying to impress with complexity. It gives you what you need — structured, reliable results — without asking for much in return. You don’t have to train your own models or dig through low-level layers to get useful outcomes.

Every task comes with a pre-trained TensorFlow Lite model. These are already optimized for edge and mobile performance, so you get real-time feedback even on modest devices. There’s no need to handle extra optimization steps — just load the model and start working with it.

Whether you're developing in Python, Android, or web, the way you access and use each task stays largely the same. That consistency saves time when moving between prototypes and deployments.

Each task gives you structured, human-readable results — categories, bounding boxes, and landmarks — instead of raw probabilities or unprocessed tensors. It's built for developers who want clean integration, not decoding pipelines.

Let's move past the theory and into what actually matters: getting it running. The following steps show how to integrate the MediaPipe Tasks API into a Python project, but similar logic applies to other supported environments.

First things first. You’ll need to install the MediaPipe package via pip. Make sure your Python environment is up to date.

```bash
pip install mediapipe
```

That’s the only package you need to install. There’s no separate TensorFlow setup. Each task does need a model file, though, which you download separately and load from a local path.

Let’s say you want to perform image classification. The MediaPipe Tasks API provides a dedicated module just for that.

```python
from mediapipe.tasks import python
from mediapipe.tasks.python import vision
```

You’ll then load the model like this:

```python
# Path to a TensorFlow Lite classification model you have downloaded locally.
model_path = 'efficientnet_lite0.tflite'

# Create the classifier with default options.
classifier = vision.ImageClassifier.create_from_model_path(model_path)
```

Just supply the path to a TensorFlow Lite model, and MediaPipe handles the rest, including preprocessing.

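The API expects its input wrapped in a MediaPipe image object, so load your picture as RGB and convert it:

```python
import mediapipe as mp
import numpy as np
from PIL import Image  # Pillow; install with `pip install pillow` if it is missing

# Force three-channel RGB so the pixel layout matches ImageFormat.SRGB.
img = Image.open('test.jpg').convert('RGB')
mp_image = mp.Image(image_format=mp.ImageFormat.SRGB, data=np.array(img))
```

This step ensures your image is in the right color format and pixel layout, which matters for accuracy. Resizing and normalization for the model are part of the preprocessing the task handles for you.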

Now, run the model on your input and grab the results.

```python
result = classifier.classify(mp_image)

# Each category carries a label and a confidence score.
for category in result.classifications[0].categories:
    print(category.category_name, category.score)
```

Within seconds, you get a clean list of classifications with scores. No post-cleaning, no decoding of raw tensors. That simplicity matters a lot when you’re on a deadline.
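If you only care about confident predictions, a small helper can flatten the result into plain tuples. The sketch below assumes the result shape used in the loop above (`classifications[0].categories`, each entry with `category_name` and `score`). The function name `top_categories` and its default threshold are illustrative choices, not part of the API, and the `SimpleNamespace` object is a hand-built stand-in so the snippet runs without a model file.

```python
from types import SimpleNamespace


def top_categories(result, min_score=0.3, limit=3):
    """Return up to `limit` (name, score) pairs scoring at least `min_score`."""
    categories = result.classifications[0].categories
    kept = [(c.category_name, c.score) for c in categories if c.score >= min_score]
    # Sort explicitly by descending score rather than relying on input order.
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return kept[:limit]


# Hand-built stand-in that mimics the shape of a classification result.
fake_result = SimpleNamespace(
    classifications=[
        SimpleNamespace(
            categories=[
                SimpleNamespace(category_name="tabby", score=0.71),
                SimpleNamespace(category_name="tiger cat", score=0.18),
                SimpleNamespace(category_name="lynx", score=0.42),
            ]
        )
    ]
)

print(top_categories(fake_result))  # [('tabby', 0.71), ('lynx', 0.42)]
```

In real use you would pass the `result` returned by `classifier.classify(mp_image)`. The classifier can also filter on its side: `vision.ImageClassifierOptions` accepts `max_results` and `score_threshold`, which trims the list before it reaches your code.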

You’re not limited to image classification. MediaPipe offers a growing list of pre-defined tasks under the same streamlined API structure. Here are some notable ones:

If you're looking to identify multiple items in a frame, object detection is your go-to. The Tasks API wraps this into a neat detector that outputs bounding boxes, confidence scores, and labels — all with a few lines of code.
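As a rough sketch of what those few lines can look like, the example below splits the work in two. `detect_objects` runs the detector and needs both the `mediapipe` package and a detection model you have downloaded; the model path you pass in is your own, and the 0.5 threshold is an arbitrary choice. `summarize_detections` is a hypothetical helper that turns the result into plain tuples, and it is exercised here against a hand-built stand-in so the snippet runs without a model.

```python
from types import SimpleNamespace


def detect_objects(image_path, model_path, score_threshold=0.5):
    """Run the object detector on one image file (needs mediapipe and a model)."""
    # Imported lazily so summarize_detections() stays usable without mediapipe.
    import mediapipe as mp
    from mediapipe.tasks import python
    from mediapipe.tasks.python import vision

    options = vision.ObjectDetectorOptions(
        base_options=python.BaseOptions(model_asset_path=model_path),
        score_threshold=score_threshold,
    )
    with vision.ObjectDetector.create_from_options(options) as detector:
        return detector.detect(mp.Image.create_from_file(image_path))


def summarize_detections(result):
    """Convert detections into (label, score, (left, top, right, bottom)) tuples."""
    rows = []
    for detection in result.detections:
        best = max(detection.categories, key=lambda c: c.score)
        box = detection.bounding_box
        corners = (
            box.origin_x,
            box.origin_y,
            box.origin_x + box.width,
            box.origin_y + box.height,
        )
        rows.append((best.category_name, best.score, corners))
    return rows


# Hand-built stand-in that mimics the shape of a detection result.
fake_result = SimpleNamespace(
    detections=[
        SimpleNamespace(
            bounding_box=SimpleNamespace(origin_x=10, origin_y=20, width=100, height=50),
            categories=[SimpleNamespace(category_name="dog", score=0.9)],
        )
    ]
)

print(summarize_detections(fake_result))  # [('dog', 0.9, (10, 20, 110, 70))]
```

Converting the box to corner coordinates up front is convenient because most drawing and cropping routines expect corners rather than an origin plus a size.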

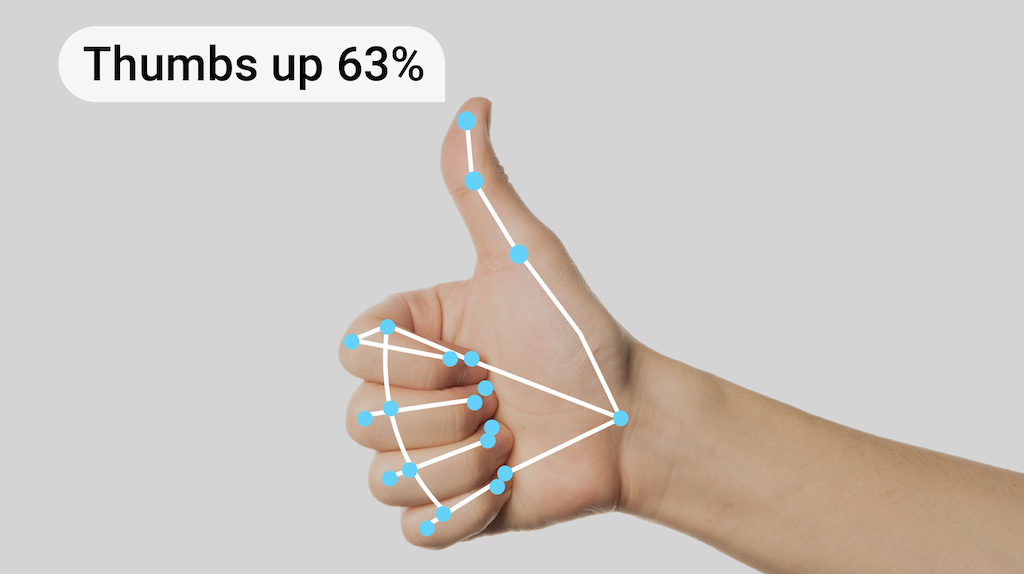

Hand and pose landmark tasks are a good fit for gesture-based apps or anything that needs body motion analysis. They return multiple landmarks across hands or full-body skeletons, and they are designed to run in real time.
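Landmark tasks report positions as normalized coordinates, roughly in the 0 to 1 range relative to the image width and height, so drawing them usually starts with a conversion to pixels. The sketch below assumes each landmark exposes `x` and `y` attributes, as the hand landmarker's `result.hand_landmarks` entries do. The helper name `landmarks_to_pixels` is hypothetical, and the sample landmarks are stand-ins rather than real model output.

```python
from types import SimpleNamespace


def landmarks_to_pixels(landmarks, image_width, image_height):
    """Scale normalized landmark coordinates to integer pixel positions."""
    points = []
    for lm in landmarks:
        # Clamp so points slightly outside the frame still map to valid pixels.
        x = min(max(lm.x, 0.0), 1.0)
        y = min(max(lm.y, 0.0), 1.0)
        points.append((round(x * (image_width - 1)), round(y * (image_height - 1))))
    return points


# Stand-in landmarks; a real hand result would carry 21 of these per hand.
sample = [
    SimpleNamespace(x=0.0, y=0.0),
    SimpleNamespace(x=0.5, y=0.5),
    SimpleNamespace(x=1.2, y=1.0),  # slightly off-frame, gets clamped
]

print(landmarks_to_pixels(sample, image_width=641, image_height=481))
# [(0, 0), (320, 240), (640, 480)]
```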

Text and audio classification are less visually flashy, but they are powerful additions. You can classify text strings (for sentiment analysis or keyword tagging, for example) and even process real-time audio to detect specific patterns or trigger alerts.

Each of these comes with similar ease-of-use — you select the task, plug in your input, and receive structured output.

Whether you're building a mobile app for fitness tracking, an interactive game that responds to hand gestures, or a customer support tool that scans user feedback for keywords, these task modules simplify the work.

For example, in a fitness app, pose tracking can detect correct form in exercises without manual labeling or traditional computer vision code. In a sign language translator, hand landmarking and gesture classification can be chained together. In security systems, object detection can flag unusual movements or items in a camera feed.
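To make the fitness example concrete, one common way to judge form is to measure the angle at a joint from three pose landmarks, such as shoulder, elbow, and wrist. This is a minimal sketch of that idea, not a method the Tasks API prescribes: `joint_angle` is a hypothetical helper, it works in 2D only, and the landmark values are made up for illustration.

```python
import math
from types import SimpleNamespace


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by segments b->a and b->c (2D)."""
    v1 = (a.x - b.x, a.y - b.y)
    v2 = (c.x - b.x, c.y - b.y)
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("landmarks coincide; angle is undefined")
    cosine = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    # Clamp to guard against tiny floating-point overshoot before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cosine))))


# Made-up landmark positions: upper arm vertical, forearm horizontal.
shoulder = SimpleNamespace(x=0.5, y=0.2)
elbow = SimpleNamespace(x=0.5, y=0.5)
wrist = SimpleNamespace(x=0.8, y=0.5)

print(round(joint_angle(shoulder, elbow, wrist)))  # 90
```

Because normalized coordinates are scaled by image width and height separately, angles computed directly from them are distorted on non-square frames. Converting to pixel coordinates first avoids that.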

The best part? You don’t need to be an ML engineer. The Tasks API makes it approachable for general developers, designers, and researchers.

If you've been avoiding real-time ML tasks because they seemed too technical, the MediaPipe Tasks API might be your entry point. It doesn't ask for custom models or deep ML knowledge. It doesn't take hours to set up. And it works on devices that most users already own. That's a lot of value in a tiny wrapper. So, whether you're building a production tool or just testing out an idea, this API offers you a way to make it happen quickly and reliably.
