
BigQuery might sound complex at first glance, but once you peel back a few layers, you'll realize it’s one of those tools built to make heavy-lifting feel light. Whether you're crunching billions of rows or trying to figure out what caused last month’s dip in sales, BigQuery is the quiet powerhouse doing the math behind the scenes. It doesn’t shout for attention, but it gets the job done — and fast.

The first thing you'll notice when you start working with BigQuery is that it doesn't behave like a typical database. You're not purchasing hardware, configuring clusters, or babysitting storage quotas. It's a serverless data warehouse: you don't manage infrastructure, you simply submit your SQL and receive answers. That isn't a simplification; it's the whole idea.

Under the hood, BigQuery runs on the Dremel engine — Google’s own technology that splits queries into smaller pieces, runs them in parallel, and stitches the result together almost instantly. So, even if your dataset is huge, your response time is usually measured in seconds.

The columnar storage structure is also worth mentioning. Rather than storing data row by row, as most traditional databases do, BigQuery stores it column by column, so a query reads only the columns it actually references. It's like asking a library for the names of all the authors published in a particular year and being handed just the list of names, not every book.
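To make the library analogy concrete, here is a toy sketch in Python. It is not how BigQuery stores data internally; the table, field names, and the character count used as a stand-in for "bytes scanned" are all made up for illustration.

```python
# Toy illustration of row-oriented vs column-oriented layouts.
# The table, field names, and values are invented for this example.

rows = [
    {"title": "Book A", "author": "Ada", "year": 2020, "text": "x" * 1000},
    {"title": "Book B", "author": "Ben", "year": 2021, "text": "y" * 1000},
    {"title": "Book C", "author": "Cy", "year": 2020, "text": "z" * 1000},
]


def to_columns(records):
    """Pivot a list of row dicts into a dict of column lists."""
    columns = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


def authors_for_year(cols, year):
    """Answer the query by touching only the 'author' and 'year' columns."""
    return [a for a, y in zip(cols["author"], cols["year"]) if y == year]


def chars_touched(cols, names):
    """Rough stand-in for bytes scanned: total text length of the chosen columns."""
    return sum(len(str(value)) for name in names for value in cols[name])


columns = to_columns(rows)
needed = chars_touched(columns, ["author", "year"])
everything = chars_touched(columns, list(columns))

print(authors_for_year(columns, 2020))
print(f"touched {needed} characters instead of {everything}")
```

The query never looks at the large `text` column, which is the whole point of a columnar layout: the wider the table, the more you save by naming only the columns you need.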

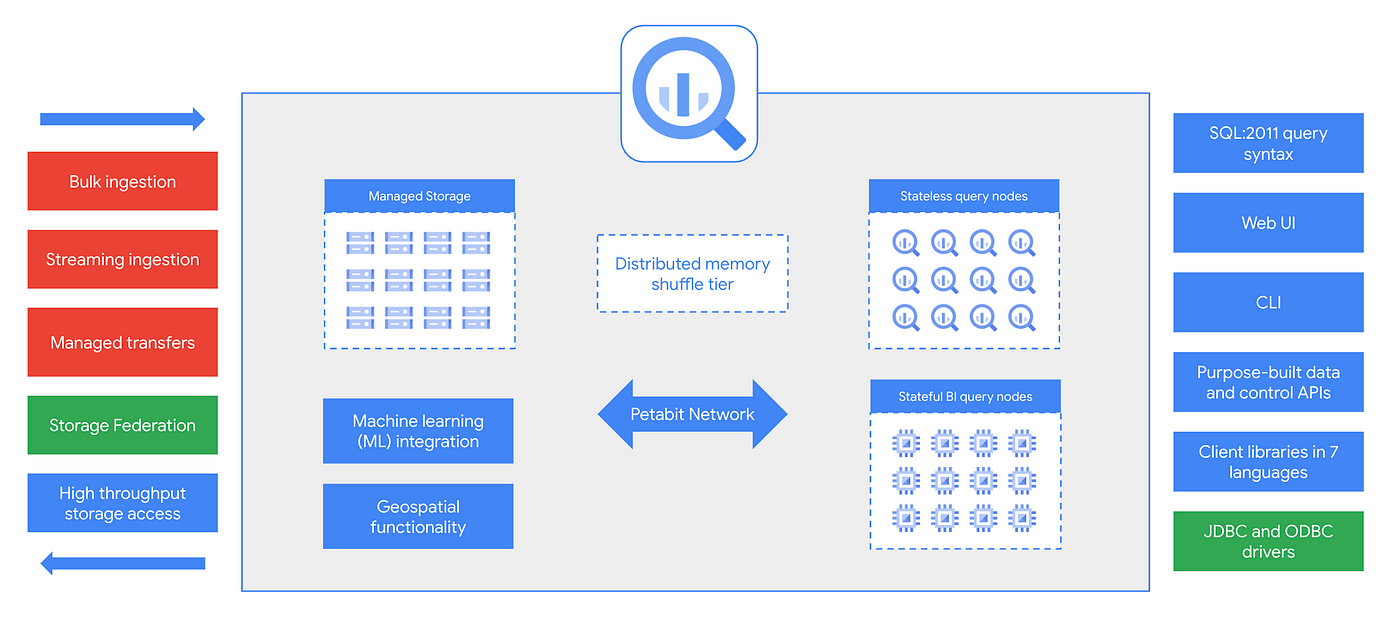

BigQuery’s architecture is a blend of several moving parts, but each is designed with performance and simplicity in mind. Here's how everything fits together:

All data in BigQuery is stored in a columnar format within something called Colossus — Google’s distributed file system. This separation of storage from compute is what makes BigQuery flexible. You can store petabytes of data and analyze it without worrying about provisioning resources ahead of time.

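Data is automatically compressed, encrypted, and replicated. Storage and queries are billed separately: storage is charged by volume, with a lower long-term rate applied automatically to tables that go 90 consecutive days without modification, while on-demand queries are charged by how much data they scan. That gives you an incentive to structure your queries smartly.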
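A quick back-of-the-envelope sketch shows why scan size matters. The price per TiB below is a placeholder, not an official figure, and the table sizes are hypothetical; plug in the current rate for your region.

```python
TIB = 2 ** 40  # bytes in one tebibyte


def estimate_query_cost(bytes_scanned, price_per_tib):
    """Estimate on-demand query cost from the number of bytes scanned.

    price_per_tib is an assumed rate supplied by the caller.
    """
    if bytes_scanned < 0 or price_per_tib < 0:
        raise ValueError("inputs must be non-negative")
    return bytes_scanned / TIB * price_per_tib


# Hypothetical table: four columns of equal size, 2 TiB in total.
full_scan = 2 * TIB
one_column = full_scan // 4
ASSUMED_PRICE = 5.0  # placeholder rate per TiB, not an official price

select_star_cost = estimate_query_cost(full_scan, ASSUMED_PRICE)
one_column_cost = estimate_query_cost(one_column, ASSUMED_PRICE)

print(f"SELECT *       : {select_star_cost:.2f}")
print(f"SELECT one_col : {one_column_cost:.2f}")
```

If you use the google-cloud-bigquery Python client, a dry run (`QueryJobConfig(dry_run=True)`) reports `total_bytes_processed` without executing the query, which is a convenient source for the `bytes_scanned` value.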

The engine behind your queries is Dremel. Whenever you submit a query, BigQuery breaks it down into stages and spreads the work across many workers, potentially thousands. These workers run in parallel, reading only the necessary columns and applying the logic you’ve written in SQL. That’s why even a basic query over a billion rows typically returns in seconds.
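The split, run in parallel, and merge pattern can be sketched in a few lines of Python. This is a generic scatter-gather illustration using threads on one machine, not Dremel's actual implementation; the function names and the sample data are invented for the example.

```python
from concurrent.futures import ThreadPoolExecutor


def split(values, shard_count):
    """Cut a column into roughly equal contiguous shards."""
    size = max(1, -(-len(values) // shard_count))  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def partial_aggregate(shard):
    """Leaf step: filter one shard and pre-aggregate it to (sum, count)."""
    kept = [v for v in shard if v > 0]
    return sum(kept), len(kept)


def merge(partials):
    """Root step: combine partial (sum, count) pairs into the final average."""
    total = sum(s for s, _ in partials)
    count = sum(c for _, c in partials)
    return total / count if count else None


def parallel_average(values, shard_count=4):
    """Average of the positive values, computed shard by shard in parallel."""
    shards = split(values, shard_count)
    with ThreadPoolExecutor(max_workers=shard_count) as pool:
        partials = list(pool.map(partial_aggregate, shards))
    return merge(partials)


# Roughly: SELECT AVG(amount) FROM orders WHERE amount > 0
amounts = [10, -3, 20, 0, 30, 40, -1, 50]
print(parallel_average(amounts))
```

Each worker returns a tiny `(sum, count)` pair rather than raw rows, so the final merge stays cheap no matter how large the shards are.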

Another key point here is that the compute layer is fully managed. You don’t need to spin up VMs or worry about scaling. Everything expands and contracts based on the job at hand.

BigQuery uses GoogleSQL, an ANSI-compliant SQL dialect, which makes adoption easier if you’ve worked with databases before. But it goes a step further. Features like user-defined functions, table-valued functions, and procedural language support give you the flexibility to write complex logic without moving data elsewhere.

The engine supports federated queries, too. That means you can query data from external sources — like Cloud Storage, Bigtable, or even Sheets — without importing it into BigQuery first. It’s not just fast; it’s convenient.

Data isn’t useful unless it’s secure. BigQuery integrates with Identity and Access Management (IAM) so you can set fine-grained controls on who can access which datasets or tables. And everything is encrypted both at rest and in transit, by default. There's also support for row-level security and column-level access policies if you want even more precision.

So, where does BigQuery actually shine? Let’s look at how different teams use it in real-world situations.

Think of an e-commerce app tracking every click, scroll, and purchase. That’s a mountain of data streaming in every second. BigQuery is well-suited to handle this kind of event-level data. You can run real-time dashboards, detect anomalies, and even feed predictions back into the app — all from the same warehouse.

It’s not unusual for product managers and data analysts to build daily reports on user retention, funnel performance, and average cart size — all running on top of BigQuery datasets.

Marketers love answers — and they want them yesterday. BigQuery helps by consolidating data from ad platforms, CRM tools, web analytics, and more. It gives a full view of the customer journey, from first touch to conversion.

Once the data’s in, you can measure return on ad spend (ROAS), build multi-touch attribution models, or even run customer segmentation models that power personalization efforts.
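ROAS itself is simple arithmetic: attributed revenue divided by ad spend. Here is a minimal sketch, assuming you have already aggregated revenue and spend per channel; the channel names and figures are hypothetical.

```python
def roas(revenue, ad_spend):
    """Return on ad spend: attributed revenue divided by ad spend."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return revenue / ad_spend


# Hypothetical per-channel totals, revenue and spend in the same currency.
channels = {
    "search": {"revenue": 12000.0, "spend": 3000.0},
    "social": {"revenue": 4500.0, "spend": 3000.0},
}

roas_by_channel = {
    name: roas(totals["revenue"], totals["spend"])
    for name, totals in channels.items()
}

for name, value in roas_by_channel.items():
    print(f"{name}: {value:.2f}")
```

A value of 4.0 means every unit of currency spent on that channel brought back four in attributed revenue. The hard part in practice is the attribution, not the division.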

Finance teams often rely on rigid tools that can’t scale with growing data. But with BigQuery, they can automate reporting on metrics like revenue, cost, margin, and forecast accuracy without manual effort. And when regulations demand transparency or auditability, the built-in logging and access control features come in handy.

Moreover, you can connect BigQuery directly to tools like Looker Studio or Tableau for rich, shareable dashboards that update as your data does.

One of the less obvious but incredibly useful features of BigQuery is BigQuery ML. It lets you train and evaluate models, and generate predictions from them, directly inside the data warehouse using SQL.

You don’t need to export your data to a separate machine learning pipeline. Everything from linear regression to k-means clustering happens where your data already lives. And yes, it works even if you’re not a data scientist — as long as you can write a SQL query, you can build a model.
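To give a feel for what "build a model with SQL" looks like, the sketch below assembles a `CREATE MODEL` statement and an `ML.PREDICT` query as plain strings. The dataset, table, and column names are hypothetical, and the helper functions are just illustration, not part of any library.

```python
def create_model_sql(model, model_type, label, source_table, features):
    """Build a BigQuery ML CREATE MODEL statement for a supervised model."""
    columns = ", ".join(features + [label])
    return (
        f"CREATE OR REPLACE MODEL `{model}`\n"
        f"OPTIONS(model_type = '{model_type}', input_label_cols = ['{label}']) AS\n"
        f"SELECT {columns}\n"
        f"FROM `{source_table}`"
    )


def predict_sql(model, source_table, features):
    """Build a query that scores rows from source_table with a trained model."""
    columns = ", ".join(features)
    return (
        "SELECT *\n"
        f"FROM ML.PREDICT(MODEL `{model}`,\n"
        f"  (SELECT {columns} FROM `{source_table}`))"
    )


# Hypothetical names, chosen only for this example.
features = ["ad_spend", "sessions"]
train_sql = create_model_sql(
    model="shop.revenue_model",
    model_type="linear_reg",
    label="revenue",
    source_table="shop.daily_metrics",
    features=features,
)
score_sql = predict_sql("shop.revenue_model", "shop.next_week", features)

print(train_sql)
print()
print(score_sql)
```

You could paste the printed statements into the BigQuery console, or submit them with the google-cloud-bigquery client's `Client.query`. Since the helpers interpolate names directly into SQL, only feed them identifiers you control.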

BigQuery doesn’t demand your attention, but it earns your trust. It’s designed for teams who want answers fast and don’t want to worry about provisioning servers or tuning performance settings. The architecture separates storage and compute so cleanly that scaling just happens. And the use cases span every department — from product to finance to marketing.

Whether you're analyzing terabytes of web logs or just trying to run a cleaner monthly revenue report, BigQuery helps you get there with minimal fuss. It’s a workhorse, not a show pony — and that’s exactly why so many teams rely on it.

Advertisement

Are you running into frustrating bugs with PyTorch? Discover the common mistakes developers make and learn how to avoid them for smoother machine learning projects

Learn how to create a Telegram bot using Python with this clear, step-by-step guide. From getting your token to writing commands and deploying your bot, it's all here

Explore the sigmoid function, how it works in neural networks, why its derivative matters, and its continued relevance in machine learning models, especially for binary classification

Improve automatic speech recognition accuracy by boosting Wav2Vec2 with an n-gram language model using Transformers and pyctcdecode. Learn how shallow fusion enhances transcription quality

Heard of Julia but unsure what it offers? Learn why this fast, readable language is gaining ground in data science—with real tools, clean syntax, and powerful performance for big tasks

Could one form field expose your entire database? Learn how SQL injection attacks work, what damage they cause, and how to stop them—before it’s too late

Discover how Google BigQuery revolutionizes data analytics with its serverless architecture, fast performance, and versatile features

Prepare for your Snowflake interview with key questions and expert answers covering Snowflake architecture, virtual warehouses, time travel, micro-partitions, concurrency, and more

The White House has introduced new guidelines to regulate chip licensing and AI systems, aiming to balance innovation with security and transparency in these critical technologies

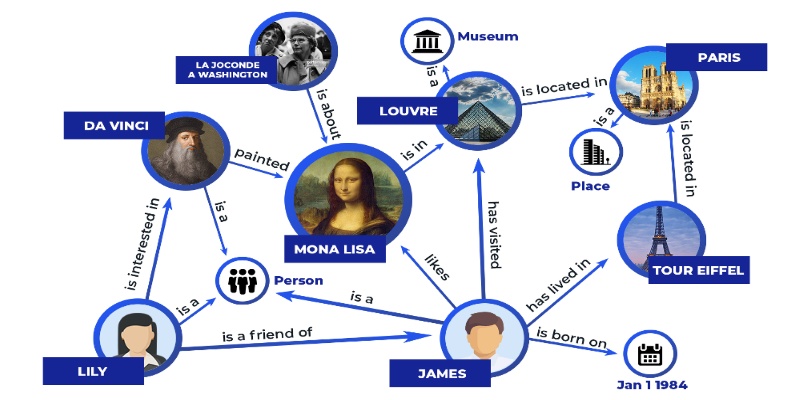

Discover how knowledge graphs work, why companies like Google and Amazon use them, and how they turn raw data into connected, intelligent systems that power search, recommendations, and discovery

How Summer at Hugging Face brings new contributors, open-source collaboration, and creative model development to life while energizing the AI community worldwide

Explore Proximal Policy Optimization, a widely-used reinforcement learning algorithm known for its stable performance and simplicity in complex environments like robotics and gaming